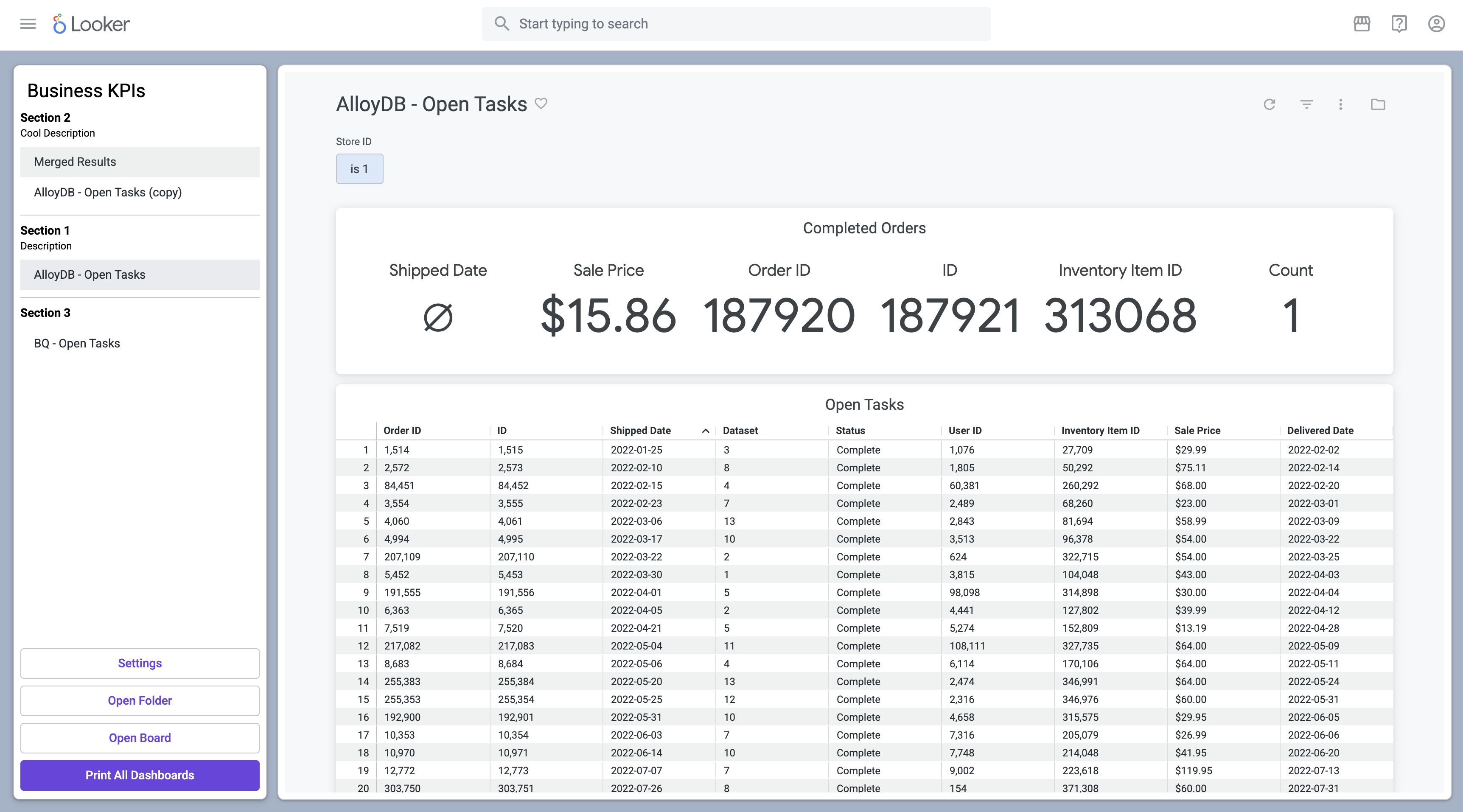

In modern business intelligence, the ability to tailor data presentations to precise business needs is paramount. While Looker provides an extensive suite of standard charts and tables, organizations frequently encounter unique requirements, such as specialized network graphs, custom geographic overlays, or highly interactive D3-based visualizations.

Looker addresses this need with its Custom Visualization Framework, which lets you run arbitrary third-party JavaScript code seamlessly within a governed BI environment. However, executing external JavaScript within an enterprise application introduces significant security challenges, primarily around Cross-Site Scripting (XSS), data exfiltration, and unauthorized DOM access.

In this deep dive, we will explore the architecture of the Custom Visualization API, the mechanics of its secure loading strategy, and best practices for safely hosting custom visualization assets.

The Custom Visualization Architecture

At its core, a Looker custom visualization is an event-driven application running inside a specialized container. Rather than directly injecting custom JavaScript into Looker's main DOM window, which would be a massive security vulnerability, Looker decouples the visualization logic from the primary host application.

API 2.0 Lifecycle Hooks and Optimization

Looker's Visualization API 2.0 establishes a structured contract built for modern asynchronous workflows:

- Initialization: An initial setup phase constructs the required DOM container, loads necessary external drawing modules, and initializes state before any data arrives.

- Asynchronous Updates: Rather than blocking the main thread, API 2.0 relies heavily on asynchronous updates. The host dynamically pushes new datasets, configuration options, and metadata to the visualization container.
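The two phases above can be sketched as a single visualization object. This is a minimal illustration, not a complete implementation: the `hello_viz` id, the label, and the markup are hypothetical, and the `create` / `updateAsync` signatures follow the API 2.0 contract as commonly documented, so verify them against the reference for your Looker version.

```javascript
// Minimal sketch of an API 2.0 visualization object.
// "hello_viz", the label, and the markup are hypothetical.
const helloViz = {
  id: "hello_viz",
  label: "Hello Viz",
  options: {},

  // Initialization: build the container once, before any data arrives.
  create(element, config) {
    element.innerHTML = '<div class="hello-viz"></div>';
  },

  // Asynchronous update: called whenever data or configuration changes.
  updateAsync(data, element, config, queryResponse, details, done) {
    const container = element.querySelector(".hello-viz");
    container.textContent = `Rows received: ${data.length}`;
    done(); // tell the host that rendering has finished
  },
};

// Register only when running inside Looker's visualization iframe.
if (typeof looker !== "undefined") {
  looker.plugins.visualizations.add(helloViz);
}
```

Guarding the registration call keeps the object loadable outside Looker, which makes it straightforward to unit test with a stub element.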

Optimizing for PDF and Headless Rendering

A key advantage of the API 2.0 architecture is its native support for PDF exports and scheduled deliveries. Looker passes a specialized context to the visualization to signal when it is rendering for a print or export job. Visualizations can optimize this headless flow by:

- Disabling complex or resource-heavy micro-animations.

- Emitting a completion signal back to the host as soon as the chart finishes drawing, so the PDF is captured once rendering is complete rather than after an arbitrary timeout.
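One way to apply both optimizations is sketched below. It assumes the print context arrives as a `details.print` flag on `updateAsync`, which should be confirmed against the API reference; `drawChart` and the settings object are hypothetical stand-ins for real drawing code.

```javascript
// Choose render settings based on whether the host signals a print/PDF job.
// Assumption: the flag is exposed as `details.print`.
function renderSettingsFor(details) {
  const isPrint = Boolean(details && details.print);
  return {
    animate: !isPrint,               // skip animations for headless renders
    transitionMs: isPrint ? 0 : 300, // draw the final state immediately
  };
}

// Hypothetical renderer: stands in for real drawing code.
function drawChart(element, data, settings) {
  element.textContent = `${data.length} rows (animated: ${settings.animate})`;
}

const printAwareViz = {
  create(element, config) {},
  updateAsync(data, element, config, queryResponse, details, done) {
    drawChart(element, data, renderSettingsFor(details));
    done(); // completion signal: the host can capture the frame now
  },
};
```

If the drawing code is itself asynchronous, `done` should be called from its completion callback rather than right after the call that starts it.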

Secure Interactive Capabilities

Visualizations built with API 2.0 can provide rich user interactions, such as drill menus, dynamic row limits, and cross-filtering, without directly accessing the host application's memory. By dispatching strict, serialized trigger events back to the host, the visualization can securely update filters or open drill overlays within the parent application.
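A click handler that delegates interaction to the host might look like the sketch below. It assumes the `LookerCharts.Utils` helpers (`toggleCrossfilter`, `openDrillMenu`) and the `details.crossfilterEnabled` flag are available inside the visualization iframe; the handler name and its return values are hypothetical and exist only to make the flow easy to follow.

```javascript
// Delegate a cell click to the host instead of touching the parent window.
// Assumption: LookerCharts.Utils is injected by Looker inside the iframe.
function handleCellClick(cell, row, event, details) {
  if (typeof LookerCharts === "undefined") return "unavailable";

  // On dashboards with cross-filtering enabled, toggle the selection.
  if (details && details.crossfilterEnabled) {
    LookerCharts.Utils.toggleCrossfilter({ row, event });
    return "crossfilter";
  }

  // Otherwise ask the host to open its drill menu for this cell's links.
  if (cell.links && cell.links.length > 0) {
    LookerCharts.Utils.openDrillMenu({ links: cell.links, event });
    return "drill";
  }
  return "none";
}
```

The visualization never builds the drill menu itself. It hands the links and the originating event to the host, which renders the overlay in the parent application.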

Strict Security and Sandboxing

Executing user-supplied JavaScript inside a high-trust BI platform requires robust defense-in-depth. Looker achieves this through strict iframe sandboxing and isolation.

Iframe Isolation

Every custom visualization is rendered within a dynamically generated iframe that is strictly isolated from the primary application. Looker applies rigorous sandbox attributes to these iframes:

- Script Execution: The sandbox explicitly allows scripts (`allow-scripts`) so the custom visualization code can run and compute layouts.

- Restricted Capabilities: By default, the iframe lacks permissions to access the parent window's DOM, access local storage or cookies belonging to the primary Looker domain, or initiate top-level navigation away from the BI application.

  - No DOM Access: Prevents Cross-Site Scripting (XSS) and UI redressing, ensuring malicious scripts cannot alter dashboard tiles, capture keystrokes, or inject fake login modals to harvest credentials.

  - No Local Storage or Cookies: Secures active session tokens and API keys from being read or exfiltrated to external servers, preventing session hijacking.

  - No Top-Level Navigation: Ensures that a compromised script cannot redirect the browser to a spoofed phishing site or unauthorized domain.
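The restrictions above map onto standard HTML sandbox tokens. The sketch below audits a sandbox attribute value for tokens that would weaken those guarantees. It is an illustration only: the exact token set Looker applies is not stated here, and `auditSandbox` is a hypothetical helper.

```javascript
// Standard sandbox tokens that would relax the isolation described above.
const RISKY_SANDBOX_TOKENS = new Map([
  ["allow-same-origin", "frame keeps its real origin, so cookies and storage become reachable"],
  ["allow-top-navigation", "frame can navigate the top-level window"],
  ["allow-top-navigation-by-user-activation", "frame can navigate the top-level window after a user gesture"],
  ["allow-popups-to-escape-sandbox", "popups opened by the frame are not sandboxed"],
]);

// Report whether scripts may run and which risky capabilities are granted.
function auditSandbox(sandboxValue) {
  const tokens = sandboxValue.split(/\s+/).filter(Boolean);
  return {
    scriptsAllowed: tokens.includes("allow-scripts"),
    risks: tokens
      .filter((token) => RISKY_SANDBOX_TOKENS.has(token))
      .map((token) => `${token}: ${RISKY_SANDBOX_TOKENS.get(token)}`),
  };
}
```

A value of `allow-scripts` alone lets the code run while leaving the frame with an opaque origin, which is what keeps the parent's cookies, storage, and DOM out of reach.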

Prevention of Data Exfiltration and XSS

Because the visualization runs in a distinct origin and sandboxed context, any malicious script embedded in it is isolated. It cannot scrape Looker application cookies, intercept authentication tokens, or manipulate the user interface of the broader Looker application. All interaction with the BI environment must be explicitly serialized and passed through Chatty, the postMessage-based message broker, which sanitizes and validates incoming events.
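To make the idea of validating serialized events concrete, here is a generic host-side check for messages arriving from a sandboxed frame. It is not Chatty's implementation: the message shape (`action`, `payload`) and the allowlist are hypothetical. The check compares `event.source` rather than `event.origin`, because a frame sandboxed without `allow-same-origin` reports its origin as the string `"null"`.

```javascript
// Hypothetical allowlist of actions the host is willing to handle.
const ALLOWED_ACTIONS = new Set(["filter", "limit", "loadingStart", "loadingEnd"]);

// Return a validated { action, payload } object, or null if the message is rejected.
function parseFrameMessage(event, expectedSource) {
  // Accept messages only from the frame window the host created.
  if (event.source !== expectedSource) return null;

  let message;
  try {
    message = typeof event.data === "string" ? JSON.parse(event.data) : event.data;
  } catch {
    return null; // malformed JSON
  }

  if (!message || typeof message !== "object") return null;
  if (!ALLOWED_ACTIONS.has(message.action)) return null;
  if (!Array.isArray(message.payload)) return null;

  // Copy only the expected fields so extra properties are dropped.
  return { action: message.action, payload: message.payload };
}
```

In a browser, the host would call this from a `message` event listener, passing the iframe's `contentWindow` as `expectedSource`.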

Registration, Loading, and Hosting Strategies

A critical aspect of maintaining security is managing how custom visualization JavaScript files are introduced to Looker. Administrators can register custom visualizations through two primary methods:

- LookML Manifest Files: By defining a `visualization` parameter in the project's `manifest.lkml`, developers can point directly to a repository-hosted file or an external URI.

- Admin > Visualizations Panel: Looker administrators can globally register a visualization via the UI by providing a unique ID, label, and main URI.

Regardless of the registration pathway, administrators should consider the following strategies for hosting visualization scripts securely:

Native LookML Project Hosting

The most secure approach is to bundle your custom visualization JavaScript directly into your LookML repository.

- Pros:

  - Absolute Version Control: Visualization versions are tied directly to LookML commits and deployments, guaranteeing perfect alignment between the backend model and frontend view.

  - Zero External Dependency: Files are served internally by Looker, preventing failures caused by external network downtime or enterprise firewall blocks.

  - Maximum Security: Eliminates cross-origin (CORS) issues and the risk of external supply-chain or DNS hijacking attacks.

- Cons:

  - Coupled Deployments: Any minor bug fix to the visualization requires a full LookML repository commit, review, and deployment cycle.

  - Repository Bloat: Storing large JavaScript bundles directly in the Git repository can increase repository size and cloning times.

Secure Private Servers and CDNs

Hosting visualizations externally allows teams to manage frontend assets independently of the LookML lifecycle.

- Pros:

  - Decoupled Releases: Frontend engineers can iterate, patch bugs, and deploy visualization updates instantly without requiring LookML access or developer mode validation.

  - Multi-Instance Sharing: A single visualization bundle can be shared seamlessly across multiple independent Looker instances or environments (e.g., staging and production).

  - Edge Caching & Performance: CDNs deliver assets from edge locations close to the user, minimizing latency for large visualization bundles.

- Cons:

  - Increased Attack Surface: Compromise of the CDN or unauthorized alteration of the hosted file could instantly inject malicious code into the Looker environment.

  - Infrastructure Overhead: Requires strict configuration and continuous monitoring of CORS headers, Subresource Integrity (SRI), and Content Security Policies (CSP).
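For externally hosted bundles, an SRI value pins the exact bytes that are allowed to load. The sketch below computes one with Node's built-in `crypto` module; the `sriHash` helper name is hypothetical, and in practice the input would be the contents of your built bundle.

```javascript
const crypto = require("node:crypto");

// Compute a Subresource Integrity string ("<algorithm>-<base64 digest>")
// for the given file contents (string or Buffer).
function sriHash(content, algorithm = "sha384") {
  const digest = crypto.createHash(algorithm).update(content).digest("base64");
  return `${algorithm}-${digest}`;
}

// Example: hash an inline snippet. For a real bundle, read the file first,
// e.g. sriHash(require("node:fs").readFileSync("dist/viz.js")).
console.log(sriHash("console.log('viz');"));
```

If the hosted file changes by even one byte, the recomputed value no longer matches the pinned one, so a tampered bundle is refused instead of executed.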

Safe Data Rendering and Interactive Configuration

In addition to strict sandboxing, the API 2.0 specification provides robust utility layers to ensure data is parsed securely and seamlessly integrates into the parent UI without breaking isolation.

Data Sanitization and Cell Helpers

When Looker pushes a dataset to a custom visualization, the values may contain complex nested objects, unsanitized text, or unformatted numbers. To prevent DOM-based Cross-Site Scripting (XSS) when injecting these values, the API exposes dedicated cell utilities:

- Sanitized Output: Helper functions automatically generate escaped HTML or plaintext strings suitable for display.

- Drill and Cross-Filter Integration: The utility layers allow the visualization to inspect whether specific rows are selected or cross-filtered and trigger associated drill menus without raw DOM traversal.
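As an illustration of the sanitized-output path, the sketch below prefers the host's cell helper when it is present and falls back to manual escaping otherwise. It assumes the helper is exposed as `LookerCharts.Utils.htmlForCell` and that cells carry `value` and optional `rendered` fields; `escapeHtml` and `safeCellHtml` are hypothetical names.

```javascript
// Escape the five HTML-significant characters.
function escapeHtml(value) {
  const replacements = { "&": "&amp;", "<": "&lt;", ">": "&gt;", '"': "&quot;", "'": "&#39;" };
  return String(value).replace(/[&<>"']/g, (ch) => replacements[ch]);
}

// Return markup that is safe to assign to innerHTML for a single cell.
function safeCellHtml(cell) {
  // Assumption: inside Looker, this helper returns sanitized HTML for the cell.
  if (typeof LookerCharts !== "undefined" && LookerCharts.Utils) {
    return LookerCharts.Utils.htmlForCell(cell);
  }
  // Fallback for local development: escape the rendered or raw value.
  const raw = cell.rendered !== undefined ? cell.rendered : cell.value;
  return escapeHtml(raw === null || raw === undefined ? "" : raw);
}
```

When only plain text is needed, assigning to `textContent` instead of `innerHTML` avoids HTML parsing altogether.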

Dynamic Configuration UI

Developers can specify configuration options, such as custom color palettes, range sliders, and selection dropdowns, which Looker renders in the native explore sidebar.

- Because these settings are registered asynchronously via the event protocol, the custom visualization can dynamically introduce new options based on the incoming dataset without managing the parent application's sidebar state itself.
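A sketch of dataset-driven options follows: it builds one color option per measure and registers them with the host. The `registerOptions` event name, the option fields (`type`, `display`, `label`, `section`, `default`, `order`), and the `queryResponse.fields.measure_like` shape follow the API 2.0 conventions as I understand them and should be checked against the reference; the option keys and default color are hypothetical.

```javascript
// Build one color option per measure in the query response.
function buildMeasureColorOptions(queryResponse) {
  const options = {};
  queryResponse.fields.measure_like.forEach((field, index) => {
    // Replace dots so "orders.count" becomes the key "color_orders_count".
    const key = `color_${field.name.replace(/\./g, "_")}`;
    options[key] = {
      type: "string",
      display: "color",
      label: `${field.label_short || field.label || field.name} color`,
      section: "Colors",
      default: "#1f77b4", // hypothetical default
      order: index,
    };
  });
  return options;
}

const dynamicOptionsViz = {
  create(element, config) {},
  updateAsync(data, element, config, queryResponse, details, done) {
    // `this.trigger` is provided by the host once the visualization is registered.
    this.trigger("registerOptions", buildMeasureColorOptions(queryResponse));
    done();
  },
};
```

The visualization only describes the options. The host decides how to render them in the sidebar and passes the chosen values back through `config` on the next update.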

Event Triggers and State Management

Visualizations can influence the broader Looker query environment by emitting specific serialized messages to the host:

- Filtering and Row Limits: Triggering updates to apply new filter conditions or query limits dynamically.

- Loading Indicators: Emitting start and end loading signals when fetching external sub-assets or performing complex computations.
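The messages above can be wrapped in small helpers, as in the sketch below. The event names (`filter`, `limit`, `loadingStart`, `loadingEnd`) and payload shapes follow the API 2.0 trigger conventions as I understand them, so confirm them against the reference; the helper names are hypothetical, and `vis` stands for the registered visualization object, whose `trigger` method the host provides.

```javascript
// Ask the host to apply a filter and re-run the query.
function requestFilter(vis, field, value) {
  vis.trigger("filter", [{ field, value, run: true }]);
}

// Ask the host to change the query's row limit.
function requestRowLimit(vis, limit) {
  vis.trigger("limit", [limit]);
}

// Show the host's loading indicator around a synchronous computation.
function withLoadingIndicator(vis, work) {
  vis.trigger("loadingStart");
  try {
    return work();
  } finally {
    vis.trigger("loadingEnd"); // always clear the indicator, even on error
  }
}
```

For example, `requestFilter(this, "products.category", "Jeans")` inside a click handler would ask the host to filter on a hypothetical `products.category` field.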

Auditing and Assessing Custom Visualization Safety

Before deploying third-party custom visualizations or external JavaScript bundles into a production Looker environment, data administrators should verify that the code does not exfiltrate proprietary data.

Here are the recommended methods to assess the safety of custom JavaScript; lkr.dev follows these principles on all our custom visualization repositories:

Static Code Analysis and Source Review

- Keyword Scanning: Inspect the unminified JavaScript source bundle for network-related APIs, such as `fetch`, `XMLHttpRequest`, `navigator.sendBeacon`, or references to unexpected external domains and URIs.

- Obfuscation Checks: Be wary of highly obfuscated or encrypted code blocks, which are often used to hide malicious payloads or unauthorized data collection logic.

- AI-Assisted Auditing with Gemini: Leverage Gemini to perform rapid vulnerability screening on visualization bundles. By providing the JavaScript source to Gemini and prompting it to act as a strict application security auditor, teams can quickly surface data exfiltration risks, external network calls, or hidden logic accessing browser storage.
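The keyword scan described above is easy to automate as a first pass. The sketch below flags lines that mention the listed network APIs or contain an absolute URL. It is a simple heuristic with hypothetical names (`NETWORK_PATTERNS`, `scanSource`), and it will miss obfuscated or dynamically constructed calls, so it complements manual review rather than replacing it.

```javascript
// Patterns for the network-related APIs named above, plus absolute URLs.
const NETWORK_PATTERNS = [
  { name: "fetch", regex: /\bfetch\s*\(/ },
  { name: "XMLHttpRequest", regex: /\bXMLHttpRequest\b/ },
  { name: "navigator.sendBeacon", regex: /\bsendBeacon\b/ },
  { name: "absolute URL", regex: /https?:\/\/[^\s"'`)]+/ },
];

// Return one finding per (line, pattern) match in the given source text.
function scanSource(source) {
  const findings = [];
  source.split("\n").forEach((line, index) => {
    for (const { name, regex } of NETWORK_PATTERNS) {
      if (regex.test(line)) {
        findings.push({ line: index + 1, name, text: line.trim() });
      }
    }
  });
  return findings;
}
```

Run it against the unminified bundle, for instance `scanSource(require("node:fs").readFileSync("viz.js", "utf8"))`, and review each finding by hand.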

Dynamic Network Monitoring

- Browser DevTools: Load the custom visualization in a secure sandbox or staging environment. Utilize the browser's Network panel to observe all outbound requests generated by the visualization iframe while interacting with live explore data.

- Zero-Trust Validation: The visualization should only communicate via the secure Chatty postMessage layer or fetch explicit, approved subresource libraries. Any unexpected cross-origin request should be flagged immediately.
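Once request URLs have been collected from the Network panel (for example, from an exported HAR file), checking them against an allowlist can be scripted. The sketch below is one way to do that; the function name and the example origins are hypothetical.

```javascript
// Return every request URL whose origin is not explicitly approved.
// URLs that cannot be parsed are treated as unexpected.
function findUnexpectedRequests(requestUrls, allowedOrigins) {
  const allowed = new Set(allowedOrigins);
  return requestUrls.filter((raw) => {
    let origin;
    try {
      origin = new URL(raw).origin;
    } catch {
      return true; // unparseable: flag for manual review
    }
    return !allowed.has(origin);
  });
}

// Example with hypothetical origins.
const flagged = findUnexpectedRequests(
  ["https://cdn.example.com/d3.min.js", "https://tracker.example.net/collect?x=1"],
  ["https://cdn.example.com"]
);
console.log(flagged); // lists the tracker URL only
```

Matching on the full origin (scheme, host, and port) rather than on a substring avoids approving look-alike hosts such as `cdn.example.com.attacker.test`.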

Dependency and Supply Chain Auditing

- Package Vulnerability Scans: If the visualization is built using a Node.js/npm toolchain, run automated dependency audits (for example, `npm audit`) to ensure included libraries (e.g., drawing or utility packages) do not suffer from known vulnerabilities or malicious supply-chain injections.

- Open Source Transparency and Self-Bundling: Visualizations developed by communities such as lkr.dev are completely open source. Data teams can inspect the raw source code on public repositories, independently audit the full dependency tree, and compile and bundle the final JavaScript package themselves before deploying it to Looker. This avoids the risks associated with downloading pre-built third-party bundles whose contents cannot be traced back to reviewed source.

Conclusion

Looker's Custom Visualization Framework demonstrates how platforms can achieve maximum extensibility without compromising on security. By combining strict iframe sandboxing, cross-frame message passing, and secure file hosting strategies, data teams can safely deploy fully tailored, interactive visualizations that integrate natively into the Looker experience.